Peer review is the quality control feature of academic publishing. An Elsevier study found 82% of researchers agreed peer review is indispensable for maintaining control of scientific communication. Peer review lends validity to science, which is vital in an age of disinformation.

But it’s not perfect and it has its limits.

Few researchers dispute that peer review is critical, but many agree the current system has flaws.

The wider publishing community is addressing some of these shortcomings with new models of peer review and emerging practices such as preprints and open science. That makes this a good time to reflect on the limitations of peer review.

When you know the limits of peer review, especially as a younger or newer researcher, you better understand your own duties as a responsible scientist. That’s good for you, your field, and science in the public eye.

What peer reviewers DO do

This article is about the limits of peer review. If you know what peer review should do, then you can better understand what it cannot do.

Peer reviewers generally:

- Determine if a manuscript will be of interest to readers

- Assess whether a study is novel, either through new findings or by adding to existing findings

- Determine the impact of a submission

- Seek to identify plagiarism

- Try to identify false or made-up data and findings

- Examine the scientific basis of findings, conclusions, and recommendations to be sure they’re valid

- Check the writing quality in terms of readability, clarity, and suitability of tone

That’s not a comprehensive list, either. Peer review is so vital that it was mentioned time and again in the hit movie “Don’t Look Up” as synonymous with valid research. That may have been an overstatement.

These duties are a lot to ask of a volunteer, and a review can take a while. How well reviewers do the job, and whether the review “succeeds,” depends on factors such as the time and effort they put in, their familiarity with the topic, and, sadly, their personal biases.

Humans are imperfect, and peer reviewers are humans, after all.

What peer reviewers DON’T do

As shown above, there’s a lot that’s expected of peer reviewers. With such a big shopping list, there is plenty that they cannot do. These are the limitations of peer review. They include the following.

Check statistics

Peer reviewers are just that: your peers. That doesn’t mean they’re statistical experts (unless, of course, you’re researching statistics), so you can’t assume they’re familiar with every type of statistical analysis.

They likely know the common and appropriate tests for the types of data generated in your field. In STEM and in quantitative areas of HSS, they likely know a one-way ANOVA from a Kruskal–Wallis test or a chi-square test.

They will generally only be able to identify obvious errors in test choices or results. Peer reviewers don’t re-run calculations (unless a glaring error shows up and they know how to check it quickly), and they’re not expected to do so.

Examine raw data

Peer reviewers also don’t check raw data. Or, at least, they’re not typically expected to do so.

Doing so would make the review process very cumbersome and even more time-consuming than it already is.

So, any issues with the original data collected may not be apparent in the draft the manuscript reviewers see.

If reviewers do find issues with the statistical analysis, their suggestions for correction may be limited. It’s the author’s responsibility to go back and correct the issue(s) using the original data, and then to write a thorough and convincing response letter to the request for revisions.

Redo experiments, test reproducibility

Just as it would be unrealistic to ask a reviewer to check all of the raw data, it’s unrealistic to ask them to redo experiments.

Reviewers do check that a manuscript provides sufficient details so that they could recreate the experiment if they wanted to. But the actual verification of this is well beyond the scope of the peer review “service.”

Verify author details

Defining and identifying appropriate authorship is an ongoing area of concern in scholarly publishing. Some manuscripts have been found to list fake authors and affiliations, or authors who didn’t even know they were included on the paper.

One fascinating study from Chile even analyzed Scopus-indexed articles to investigate this practice systematically.

Peer reviewers may be familiar with the researchers listed on a manuscript, but more often than not, they aren’t, or they’re reviewing a blinded manuscript (they don’t know who wrote it).

Checking that all listed authors are real and that the affiliations are correct would be a very difficult task, and one that would waste the expertise reviewers offer. So, the way things are right now, it’s up to the authors to be honest. Systems like the ORCID identifier are emerging and proving valuable in this area.

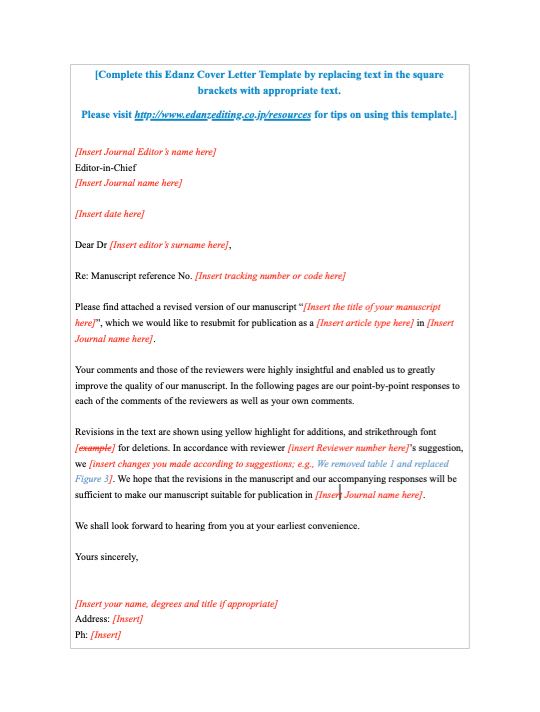

Peer review response letter template

Struggling to write that letter to the editor and peer reviewers? Try this free template! Simply replace the placeholder text with your own information, edit, and send with your detailed responses!

★ Pro Tip: Remember to double-check the spelling of the journal editor’s name!

Verify conflicts of interest

Conflicts of interest (COIs) strongly affect how biased readers perceive a study to be. So it goes without saying that declaring potential COIs is essential for every manuscript.

That said, it would be next to impossible for peer reviewers to verify and research the validity of an author’s declarations or find COIs that they may have omitted.

As a matter of course, reviewers will check that declarations have been made and flag any related bias they find in the manuscript, but this is the extent of their expected role in this area.

Good researchers help offset peer review’s limitations

In an ideal world, all of these things could be caught before articles are published, but it’s just not realistic with the current system in place.

Peer review is still an essential check and helps strengthen articles and catch major flaws that could influence the interpretation of the results.

Keeping these things in mind will, hopefully, help you realize that no study you read is perfect. But the more people who publish in an area, the better we’ll all get at judging which studies have more impact than others.

Use a critical eye when reading articles and don’t be so quick to attack the entire publishing system when flaws are found post-publication.

As a researcher, check your data scrupulously, clarify your understanding of authorship, and do good work.

Slowly but surely, new models of peer review will emerge and some day the above list may be a thing of the past.

To get a step ahead on the peer review process, you can get your manuscript professionally edited by an Edanz expert, send it for our Expert Scientific Review (essentially a peer review before the peer review), and choose a journal that’s suitable for your work.

You can also train yourself up on all steps of the research cycle by signing up for the Edanz Learning Lab.